
- SSA is very labour intensive, so we need an automatic metric to evaluate our chatbots easily and reliably.
- The authors found that perplexity has a strong correlation with the SSA evaluation metric.
- Perplexity represents the number of choices the model is trying to choose from when producing the next token. The lower the perplexity, the more confident the model is about the next token it generates.
- The findings show that the lower the perplexity, the better the SSA score of the model, with a strong correlation coefficient (R² = 0.…).
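The "number of choices" reading of perplexity can be checked with a small sketch. It assumes the standard definition (the exponential of the average negative log-probability assigned to each actual next token); the function name `perplexity` and the probability values are made up for illustration and are not taken from the Meena work.

```python
import math


def perplexity(token_probs):
    """Perplexity from the probabilities a model gave to each actual next token.

    Assumes the standard definition: exp of the mean negative log-probability.
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    mean_nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(mean_nll)


# A model that always spreads its belief evenly over 4 candidate tokens
# behaves as if it were choosing among 4 options at every step.
uniform = perplexity([0.25] * 10)

# Hypothetical probabilities from a more confident model.
confident = perplexity([0.9, 0.8, 0.95, 0.85])

print(uniform, confident)
```

The even split over four tokens gives a perplexity of about 4, while the more confident model scores closer to 1, which matches the intuition that lower perplexity means fewer effective choices.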

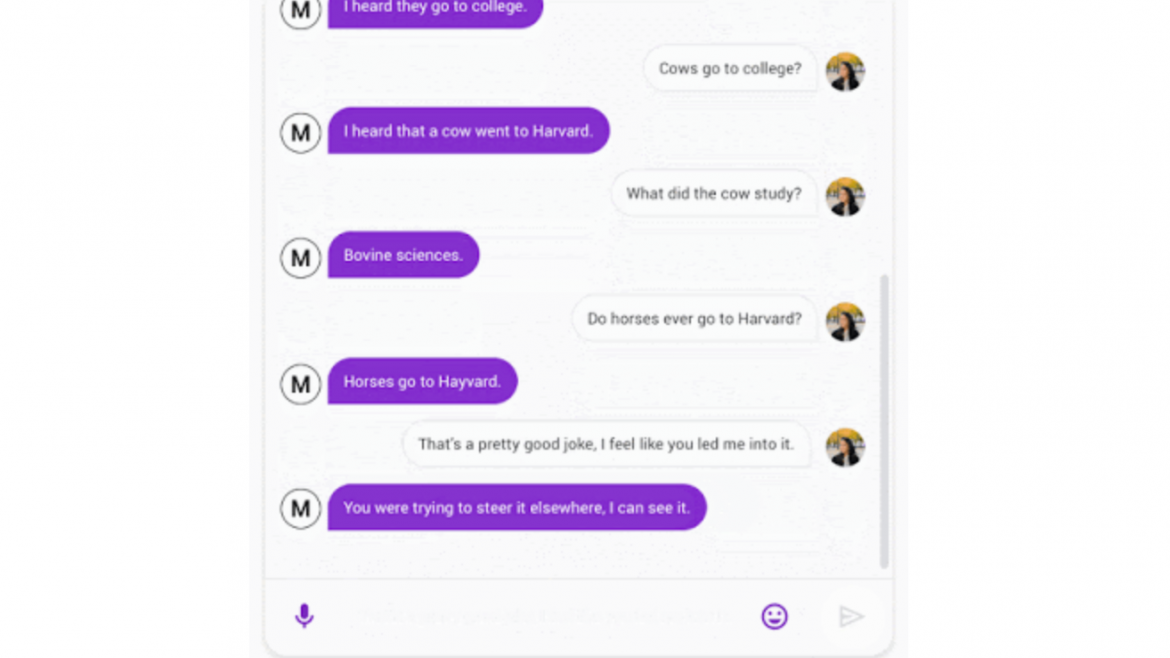

Google claims that its chatbot Meena will change the way tech applications chat with human beings. Through hyperparameter tuning, the authors discovered that the key to higher conversational quality is a more powerful decoder.

- Trained on 341GB of text data (8.5x more data than the GPT-2 model).
- Training data are organised in a tree thread format, where each reply in the thread is viewed as one conversation turn.
- Each training example has seven turns of context, a good balance between having long enough context to train the chatbot and fitting models within memory constraints.

Sensibleness and Specificity Average (SSA)

- The authors hired crowd-sourced workers to label model responses in order to compute SSA.
- For each chatbot, the authors collected 1600 to 2400 individual conversation turns through around 100 conversations.
- For each response, crowd workers need to answer two questions: is the response sensible, and is it specific?
- The SSA score is the average of the fraction of responses labeled “sensible” (sensibleness of the chatbot) and the fraction labeled “specific” (specificity of the chatbot).
- Meena outperformed all existing SOTA chatbots by large margins and is near human performance.
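The SSA calculation described above can be sketched in a few lines. This is a minimal illustration, assuming each response carries two yes/no labels from a crowd worker; the function name `ssa_score` and the sample labels are hypothetical, and the authors' actual labelling pipeline may apply extra rules that are not covered here.

```python
def ssa_score(labels):
    """Compute sensibleness, specificity and their average (SSA).

    `labels` is a list of (sensible, specific) booleans, one pair per
    chatbot response. This layout is an assumption for illustration.
    """
    if not labels:
        raise ValueError("need at least one labelled response")
    n = len(labels)
    sensibleness = sum(1 for sensible, _ in labels if sensible) / n
    specificity = sum(1 for _, specific in labels if specific) / n
    return sensibleness, specificity, (sensibleness + specificity) / 2


# Hypothetical labels for four responses from one chatbot.
sample = [(True, True), (True, False), (False, False), (True, True)]
sensibleness, specificity, ssa = ssa_score(sample)
print(sensibleness, specificity, ssa)
```

With these made-up labels, three of four responses are sensible and two of four are specific, so the SSA is the mean of 0.75 and 0.5.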
Towards a Conversational Agent that can chat about… anything!

Context

- Conversational agents (chatbots) are generally very specialised. They don’t allow users to stray too far from expected usage.
- Current open-domain chatbots have major flaws: they don’t make sense, are inconsistent, and lack basic knowledge about the world.
- Our goal is to eventually build a generalised conversational agent that can talk about anything a user wants!

Meena is a 2.6 billion parameter end-to-end neural chatbot.

- It can conduct more sensible and specific conversations than existing state of the art (SOTA) chatbots (based on SSA).
- The authors introduced Sensibleness and Specificity Average (SSA), which captures basic but important attributes of human conversation.
- They discovered that perplexity is highly correlated to SSA.
- The training objective is to minimise perplexity, the uncertainty of predicting the next word in a conversation.
- The architecture is the Evolved Transformer seq2seq.
- It has 1 Evolved Transformer encoder block, which processes the conversation context to help Meena understand what has already been said.
- It has 13 Evolved Transformer decoder blocks, which use the context vector from the encoder block to formulate an actual response.
